UC Berkeley MEng

292C: Human-AI Design Methods Spring 2026

Team:

Project Overview

Buddi is a biomorphic smart-home device shaped like a small potted plant. It senses indoor environmental conditions — temperature, humidity, CO₂ — and communicates them through physical leaf movements. When the room is healthy, the leaves stand upright. When conditions decline, they droop. No screen, no app, no numbers. Just a plant that wilts when your air isn't good.

The idea is simple, but the design problem behind it isn't: how do you get someone to care about something they can't see, don't understand, and don't prioritize, without adding another notification to their life?


The Brief

For my Human-Centered Design course at UC Berkeley's Jacobs Institute, my team set out to explore indoor environmental health — specifically, the gap between what's happening in your air and what you actually do about it. Over the course of the semester, we conducted interviews, observational studies, and extensive synthesis work to arrive at a product concept, then built it into a functional prototype that we presented and tested at the Jacobs Design Showcase.

My contributions spanned the full arc of the project: I conducted a semi-structured interview and an in-context observational study during the research phase, contributed to synthesis and concept selection, and then led circuit design, sensor calibration, UI/UX, and mechanical prototyping through to the final deliverable. This page walks through my design process from beginning to end.

The Problem with Indoor Air

Indoor environmental health is invisible and abstract. Factors such as temperature, humidity, and CO₂ matter for comfort and long-term wellbeing, but they don't announce themselves the way a dirty kitchen or a pest infestation does. Current smart-home solutions try to solve this with dashboards and data, but our early research suggested that more information might not be the answer. (As someone who's spent hours staring at sensor readouts while debugging ESP32 projects, I can confirm: more data does not automatically mean more understanding.)

As a team, we framed a central question:

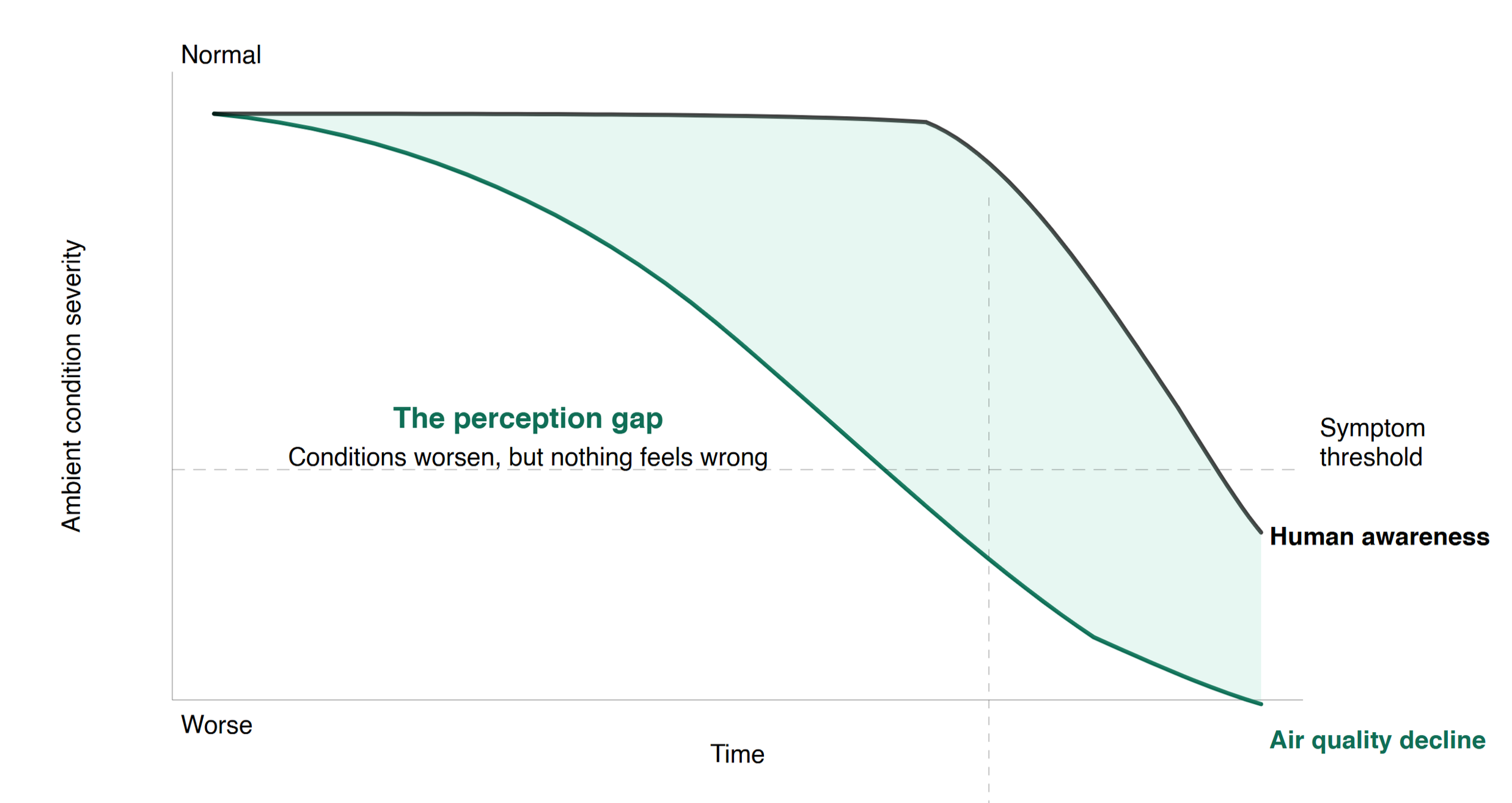

How might we translate invisible indoor environmental data so that residents can recognize a declining room state before physical symptoms and signs emerge?

Before we could design anything, we needed to understand how people actually relate to their indoor environment. Not just in theory, but in practice.

I contributed two primary pieces of research: a semi-structured interview with an individual living in a shared apartment, and an in-context observational study of someone cooking in a space with poor ventilation. Both ended up shaping the product direction in ways I didn't expect going in.

Researching the Product Space

The Interview

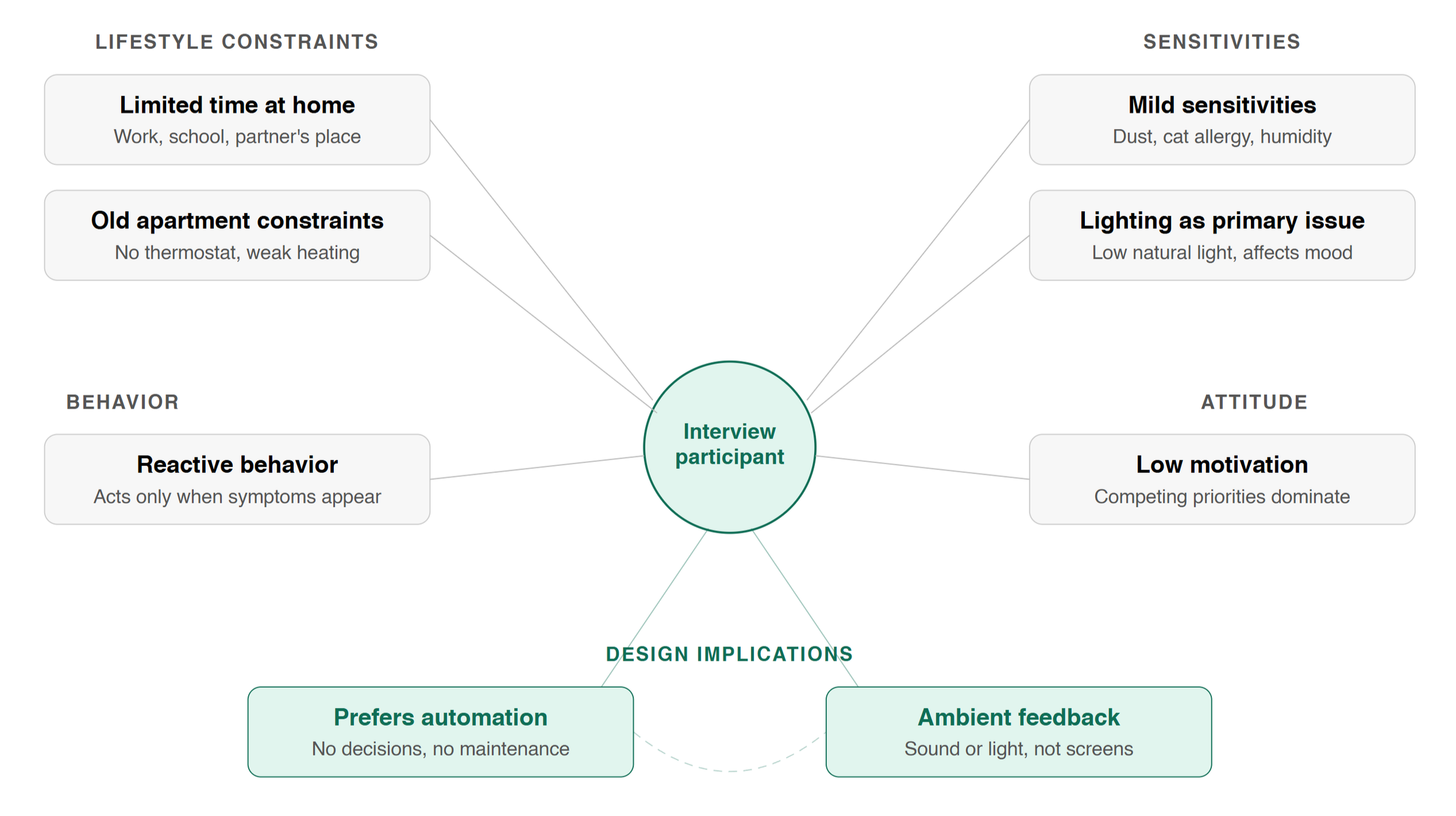

I interviewed a 22-year-old master's student living in an older San Francisco apartment with two roommates. She was a busy person; between work, school, and her partner's place, she was rarely home. I expected to hear complaints about air quality or temperature. Instead, what I got was something closer to indifference.

She had mild sensitivities — dust made her sneeze, her roommate's cat triggered eye irritation, her room got stuffy — but her response to all of it was the same: wait for it to pass, or do something minimal like opening a window. She'd previously owned an air purifier but gave up on it because it was bulky and the filter changes were annoying. When I asked what she'd change about her environment, she said the lighting. Not the air. Not the temperature.

Two things from this interview stuck with me. First, when I asked how she'd want a device to communicate environmental information, she didn't want a screen or numbers; she'd rather have sound or lighting cues, something she wouldn't have to actively monitor. Second, she wanted whatever it was to be automatic. She explicitly said she didn't want to make decisions about her environment. She wanted something that handled it so she didn't have to think.

I used AI tools to process the transcript afterward: extracting keywords, clustering themes, and analyzing emotional tone. The synthesis confirmed what I'd felt sitting across from her: this wasn't someone suffering from a problem. This was someone who didn't have the bandwidth to care. That distinction turned out to be the most important insight of my entire research phase. You can design a solution for someone who's frustrated. Designing for someone who's indifferent requires a fundamentally different approach.
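The page doesn't name the AI tools used on the transcript. As a rough, dependency-free stand-in for the keyword-extraction step only, a term-frequency pass looks like the sketch below. The stopword list and the function name `top_keywords` are hypothetical, and this does not attempt the theme clustering or tone analysis.

```python
import re
from collections import Counter

# Small illustrative stopword list (an assumption; real pipelines use a larger one).
STOPWORDS = {
    "the", "a", "an", "and", "or", "but", "i", "it", "to", "of", "in",
    "on", "is", "was", "my", "me", "that", "this", "so", "just", "like",
    "you", "she", "he", "they", "we", "for", "with", "at", "be", "do",
}


def top_keywords(transcript: str, k: int = 10) -> list[tuple[str, int]]:
    """Return the k most frequent non-stopword tokens in a transcript (hypothetical helper)."""
    # Lowercase and split on anything that is not a letter or apostrophe.
    tokens = re.findall(r"[a-z']+", transcript.lower())
    # Drop stopwords and very short tokens, which rarely carry themes.
    content = [t for t in tokens if t not in STOPWORDS and len(t) > 2]
    return Counter(content).most_common(k)
```

Raw frequency only surfaces what a participant says often, not what matters to them, so a pass like this is a starting point for human coding rather than a replacement for it.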

The Observational Study

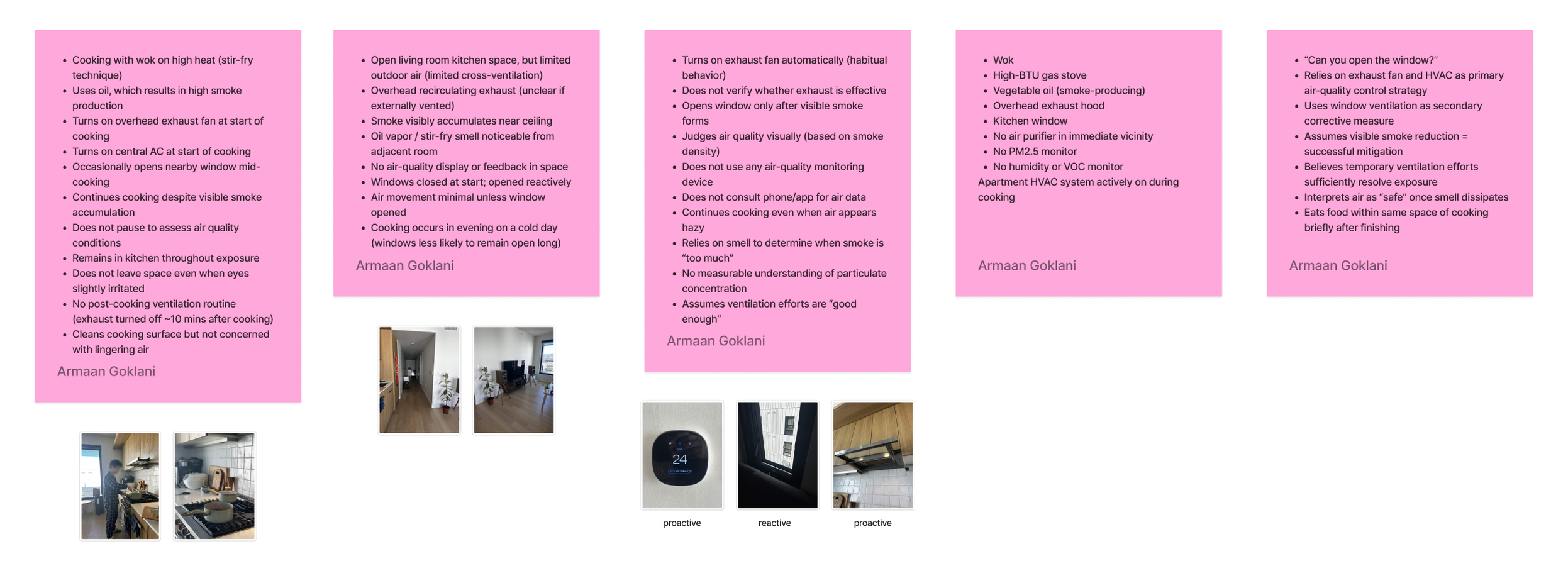

My second research contribution was an in-context observation. I watched a participant cook with a pot on high heat, stir-frying in oil, which produces significant visible smoke.

I documented everything: the environment, the sequence of actions, and the gaps between what the participant could have done and what they actually did. The kitchen was part of an open-plan living room with limited cross-ventilation. The overhead exhaust was recirculating. Windows were closed at the start. Smoke accumulated near the ceiling. Oil vapor was noticeable from the adjacent room.

The participant turned on the exhaust fan automatically at the start of cooking, out of habit rather than because they'd assessed the air. They opened a window partway through, but only after smoke was visually obvious. They continued cooking despite the haze, didn't leave the space even when their eyes were slightly irritated, and turned the exhaust off about 10 minutes after finishing. There was no post-cooking ventilation routine. They cleaned the cooking surface but didn't seem concerned about lingering air quality.

I coded the behavioral patterns I observed: exhaust fan use was habitual, not deliberate. The participant judged air quality by smoke density and smell, not by any measured standard. They assumed visible smoke reduction meant the air was fine. They relied on the exhaust fan and HVAC as their primary strategy and treated window ventilation as a backup. They interpreted the air as "safe" once the smell went away. They ate in the same space shortly after cooking.

This observation crystallized something the interview had hinted at: people don't manage air quality, they manage perceptible symptoms. Smoke they can see, smells they can notice, irritation they can feel. Everything below that threshold goes unaddressed. Any product that communicates through numbers or data is fighting against this reality. A product that makes the invisible perceptible, through a channel as intuitive as a plant wilting, instead works with it.

Connecting the Dots

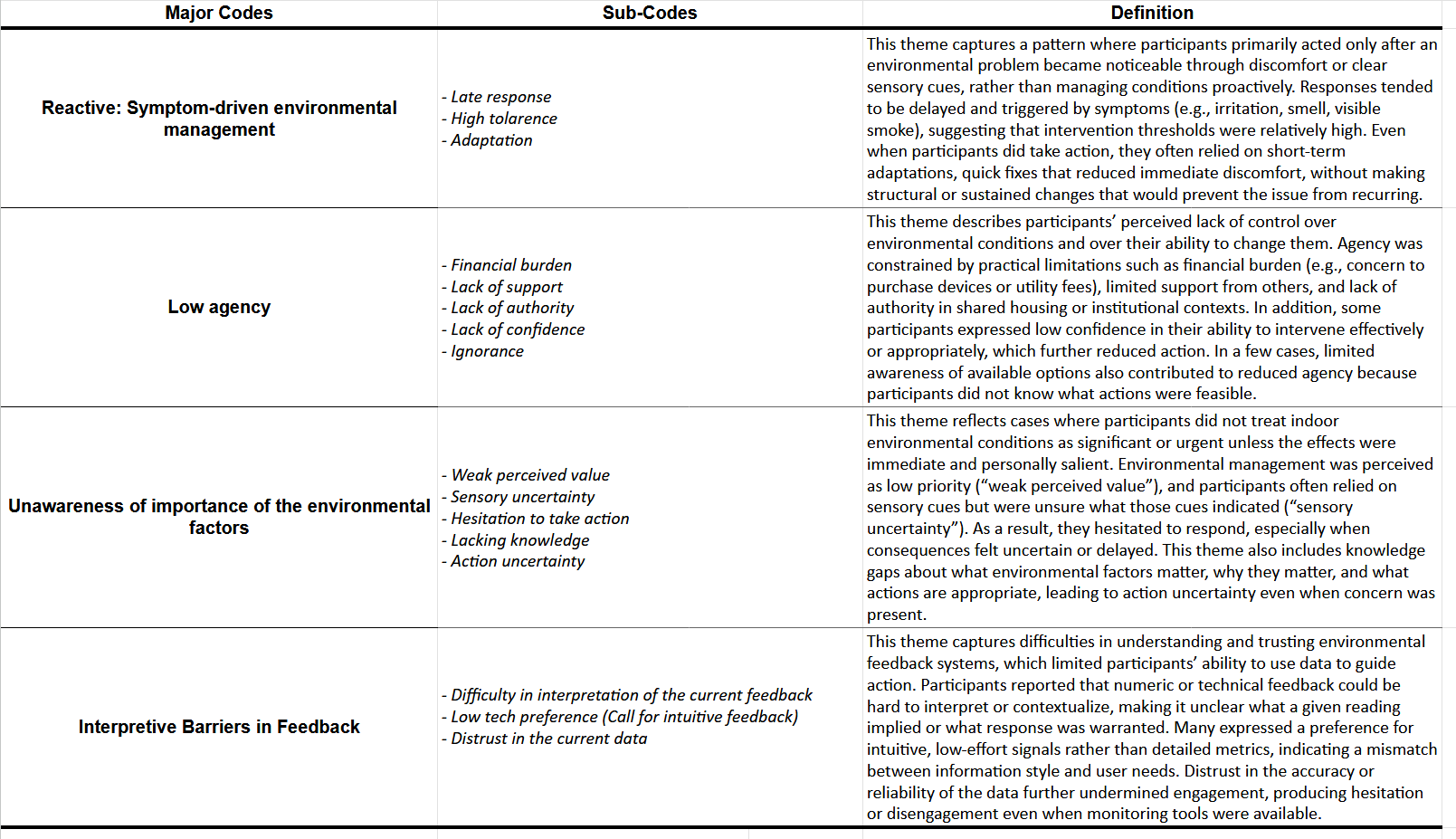

My findings fed into the team's broader synthesis alongside Semina's observational studies and everyone else's interviews. Together, we transcribed and coded the data through affinity mapping and inductive line-by-line open coding, clustering excerpts into themes directly from the language participants used.

Four key insights emerged across the full dataset. Environmental management was reactive and symptom-driven: people intervened only after discomfort, never proactively. Users reported low agency, feeling constrained by shared living situations, building limitations, or competing priorities. There was widespread unawareness of environmental factors unless effects were immediate or disruptive. And participants struggled with interpretive barriers: numeric indicators didn't translate into confident action.

These weren't abstract findings for me. My interview participant was almost a textbook case of low motivation and reactive behavior. She managed her environment only when symptoms demanded it and explicitly deprioritized it against school and work. My cooking observation was a clear case of symptom-driven management combined with total unawareness of invisible factors like particulate concentration. And honestly, coding someone else's cooking habits while knowing I do the exact same thing was a humbling exercise in self-awareness.

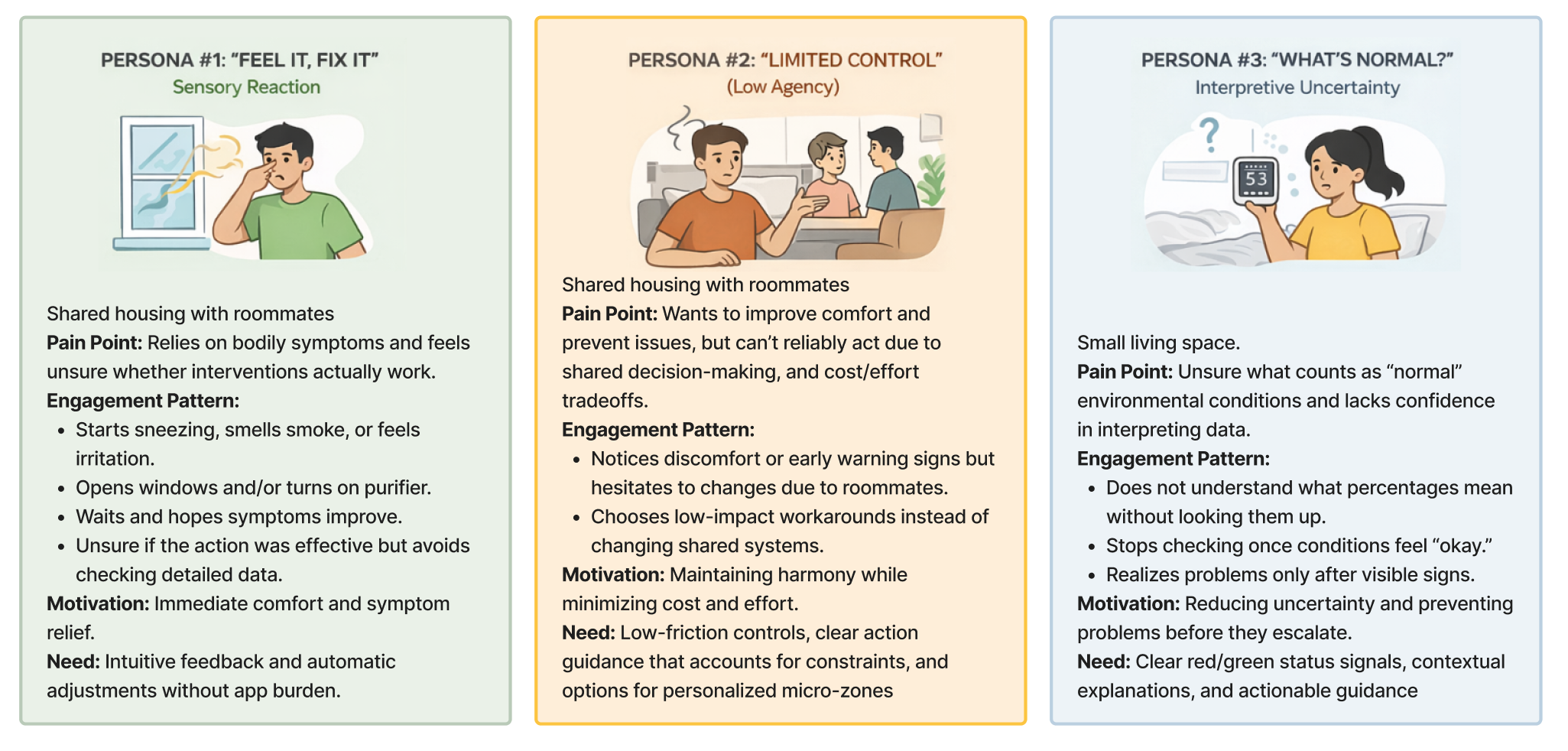

The team also constructed three AI-assisted personas grounded in this data. The one that resonated most with my research was Persona #2: Limited Control, someone who wants to improve comfort but can't reliably act due to shared decision-making, cost concerns, and competing priorities. They need low-friction controls and clear guidance that accounts for real-world constraints. My interview participant was practically the case study for this persona.

Concept Generation and Selection

Once we had our insights locked in, it was time to figure out what to actually build. The team used an AI-augmented process to go wide first and narrow later. We generated a big pool of concepts, and each of us went deep on our own before bringing everything back to the group.

Below are some of the concepts I personally generated during this phase. You can see the range we were working with, from the practical to the ambitious to the "probably not buildable in a semester but fun to think about."

To make sense of all the ideas across the team, we ran them through bubble grids plotting feasibility against impact, t-SNE semantic maps built from text embeddings of the concept descriptions, and cluster analysis to see which ideas were actually saying the same thing in different ways. The t-SNE visualization was one of those moments where the ML background from my other coursework unexpectedly paid off. Seeing concept clusters emerge spatially made the selection process feel far more grounded than a spreadsheet ever could.
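The team's pipeline used learned text embeddings with t-SNE and cluster analysis. To illustrate just the "same idea, different words" check without ML dependencies, here is a minimal sketch that swaps in bag-of-words vectors and a greedy cosine-similarity grouping. It does not perform a t-SNE projection, and the helper names and the 0.5 threshold are hypothetical.

```python
import math
import re
from collections import Counter


def vectorize(text: str) -> Counter:
    """Bag-of-words stand-in for a learned text embedding (an assumption)."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def group_concepts(descriptions: list[str], threshold: float = 0.5) -> list[list[int]]:
    """Greedy single pass: each concept joins the first group whose seed it
    resembles at or above `threshold`; otherwise it starts a new group."""
    vectors = [vectorize(d) for d in descriptions]
    groups: list[list[int]] = []
    for i, vec in enumerate(vectors):
        for group in groups:
            if cosine(vec, vectors[group[0]]) >= threshold:
                group.append(i)
                break
        else:
            groups.append([i])
    return groups
```

Word overlap misses paraphrases that share no vocabulary, which is exactly where semantic embeddings earn their keep; the grouping logic stays the same if `vectorize` is replaced with an embedding model.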

We scored every concept against four requirements that came directly from the research: does it sustain engagement over time (not just feel novel on day one), is it realistic to deploy, can it influence behavior without guilt-tripping or punishing the user, and does it create long-term impact rather than a short-term wow moment.

The concept that came out on top was a behavior-shaping artifact: something that communicates through physical feedback and gentle reinforcement rather than screens or push notifications. The system follows a sense, predict, actuate loop. It senses environmental conditions and user interaction patterns. It predicts whether conditions are declining through a lightweight rule-based inference engine (we deliberately chose interpretability over precision here). And it actuates through physical leaf movements designed to nudge behavior without becoming repetitive or annoying.
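A minimal sketch of one pass through a sense, predict, actuate loop follows. It covers only the environmental readings, not interaction patterns, and every threshold, angle, and name in it is a hypothetical placeholder rather than Buddi's calibrated firmware values. The rule-based predictor counts violated comfort rules, that fraction maps linearly to a leaf angle, and a per-update step cap keeps the leaves drifting rather than twitching.

```python
from dataclasses import dataclass

# Comfort bands are illustrative assumptions, not Buddi's calibrated values.
CO2_LIMIT_PPM = 1000.0
HUMIDITY_RANGE = (30.0, 60.0)      # percent relative humidity
TEMPERATURE_RANGE = (20.0, 26.0)   # degrees Celsius

UPRIGHT_DEG = 90.0   # hypothetical servo angle for healthy, upright leaves
DROOPED_DEG = 20.0   # hypothetical servo angle for fully drooped leaves
MAX_STEP_DEG = 5.0   # cap on movement per update so the plant drifts, not twitches


@dataclass
class Reading:
    temperature_c: float
    humidity_pct: float
    co2_ppm: float


def predict_decline(r: Reading) -> float:
    """Rule-based inference: fraction of comfort rules currently violated (0.0 to 1.0)."""
    rules = [
        r.co2_ppm > CO2_LIMIT_PPM,
        not (HUMIDITY_RANGE[0] <= r.humidity_pct <= HUMIDITY_RANGE[1]),
        not (TEMPERATURE_RANGE[0] <= r.temperature_c <= TEMPERATURE_RANGE[1]),
    ]
    return sum(rules) / len(rules)


def target_angle(decline: float) -> float:
    """Map decline linearly from upright (0.0) to fully drooped (1.0)."""
    return UPRIGHT_DEG - decline * (UPRIGHT_DEG - DROOPED_DEG)


def actuate(current_deg: float, target_deg: float) -> float:
    """Move toward the target by at most MAX_STEP_DEG per update."""
    delta = max(-MAX_STEP_DEG, min(MAX_STEP_DEG, target_deg - current_deg))
    return current_deg + delta


def step(current_deg: float, reading: Reading) -> float:
    """One pass of the sense -> predict -> actuate loop."""
    return actuate(current_deg, target_angle(predict_decline(reading)))
```

Because each rule is a plain comparison, anyone can read off why the plant is drooping, which is the interpretability-over-precision trade-off described above; the step cap is one simple way to keep the movement from feeling repetitive or alarming.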

This architecture mapped directly onto what my research had surfaced. My interview participant wanted automation and ambient cues, not data. My cooking observation showed that reactive symptom management was the default. The whole point of the sense, predict, actuate loop was to intervene before symptoms show up, using a feedback channel that nobody needs to learn how to read. A plant that droops when something's wrong. That's it.
